Spend five minutes on Hacker News and you will witness the same two-act play on a permanent loop. One camp insists that AI coding tools have made the software engineering profession essentially obsolete. The other claims these tools are little more than a glorified autocomplete that generates more bugs than features.

As a developer who has spent more time refactoring legacy monoliths than I would like to admit, I find this binary discourse exhausting. We are currently stuck in a reality gap. On one side, we have marketing promises of 10x productivity. On the other, we have the messy, context-heavy world of professional software architecture.

I have been following the recent inquiries into how AI is actually performing in the wild. What comes through is an urgent demand from the engineering community to cut through the noise. We do not need more hype. We need granular, case-study-style evidence. We need to know how these tools handle a complex microservices architecture or a finicky C++ codebase, not just how they generate a boilerplate React component for a weekend project.

The Binary Trap and the Search for Evidence

The current discussion is failing us because it relies on anecdotal extremes. When a developer says AI is useless, they might be trying to use it on a proprietary stack with zero public documentation. When another says it is transformative, they might be working on greenfield projects where the logic is standard and the libraries are well-worn. Without empirical data, we are just shouting across a fence.

Measuring productivity is notoriously difficult in our field. It has never been about lines of code written. If it were, we would all be getting bonuses for failing to refactor. True efficiency shows up in the time spent debugging, the cognitive load of context-switching, and the long-term maintainability of the system. The open question among practitioners is whether AI tools provide genuine utility or simply introduce a new layer of workflow complexity that negates any speed gains.

The Hidden Tax of AI Assistance

There is a hidden tax associated with AI-assisted coding that the brochures rarely mention. I call it the verification burden. When an AI generates a block of code, the developer’s role shifts from writer to editor. On the surface, this looks like a win. However, auditing someone else’s code (or a machine’s code) often requires more mental energy than writing it yourself. You have to verify the logic, check for edge cases, and ensure it does not introduce security vulnerabilities or architectural regressions.
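
To make the verification burden concrete, here is a back-of-the-envelope sketch. The function name, its parameters, and every number in it are my own hypothetical placeholders, not measurements. It simply compares the time to write something by hand against the time to prompt, review, and occasionally rework the generated version.

```python
def net_minutes_saved(write_min, prompt_min, review_min, defect_prob, rework_min):
    """Expected minutes saved by delegating a task to an AI assistant.

    All inputs are estimates supplied by the developer (hypothetical model):
      write_min   - time to write the code by hand
      prompt_min  - time spent prompting and iterating with the tool
      review_min  - time spent auditing the generated code
      defect_prob - estimated chance the generated code needs rework (0..1)
      rework_min  - time to fix it when it does
    A negative result means the assisted path is expected to cost more.
    """
    assisted_cost = prompt_min + review_min + defect_prob * rework_min
    return write_min - assisted_cost


# Made-up numbers for two kinds of task, for illustration only.
boilerplate = net_minutes_saved(
    write_min=30, prompt_min=3, review_min=5, defect_prob=0.25, rework_min=8
)
legacy_fix = net_minutes_saved(
    write_min=45, prompt_min=10, review_min=30, defect_prob=0.5, rework_min=60
)

print(f"boilerplate: {boilerplate:+.0f} min, legacy fix: {legacy_fix:+.0f} min")
```

With these invented inputs the boilerplate task comes out ahead and the legacy fix comes out behind. The point is not the specific figures but that review and rework time belong in the equation at all.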

This shift from author to reviewer is fundamentally changing the developer experience. If you are a senior architect, you might have the domain knowledge to spot a hallucinated library call in seconds. But what happens to junior-level mentorship?

If we use AI to skip the easy stuff, we might be accidentally removing the very training wheels that juniors need to develop the intuition required for the hard stuff. We are essentially making a trade. We gain speed in the short term, but we potentially degrade the collective skill level of the team over the long haul.

Moving Toward a Pragmatic Framework

To move forward, we have to treat these tools with the same skepticism we apply to a new third-party dependency. We need to move from subjective rhetoric to professional experimentation.
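
As a rough illustration of what professional experimentation could look like, the sketch below compares tasks completed with and without AI assistance on two simple measures: median hours to merge and the share of tasks later reopened. The `Task` record, its fields, and the sample data are all hypothetical. A real team would pull these from its own issue tracker and would need far more than a handful of tasks before drawing conclusions.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Task:
    assisted: bool         # was an AI tool used on this task?
    hours_to_merge: float  # time from first commit to merge
    reopened: bool         # did the work come back as a bug or revert?


def summarize(tasks, assisted):
    """Return count, median hours to merge, and reopen rate for one group."""
    group = [t for t in tasks if t.assisted == assisted]
    if not group:
        raise ValueError("no tasks in this group")
    return {
        "n": len(group),
        "median_hours": median(t.hours_to_merge for t in group),
        "reopen_rate": sum(t.reopened for t in group) / len(group),
    }


# Invented sample data, for illustration only.
SAMPLE = [
    Task(assisted=True, hours_to_merge=2.0, reopened=False),
    Task(assisted=True, hours_to_merge=3.0, reopened=True),
    Task(assisted=True, hours_to_merge=4.0, reopened=False),
    Task(assisted=False, hours_to_merge=4.0, reopened=False),
    Task(assisted=False, hours_to_merge=5.0, reopened=False),
    Task(assisted=False, hours_to_merge=6.0, reopened=False),
]

for label, flag in (("assisted", True), ("unassisted", False)):
    print(label, summarize(SAMPLE, flag))
```

Tracking a quality signal such as reopen rate next to a speed signal is a deliberate choice here: speed alone would hide exactly the kind of downstream cost this article is worried about.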

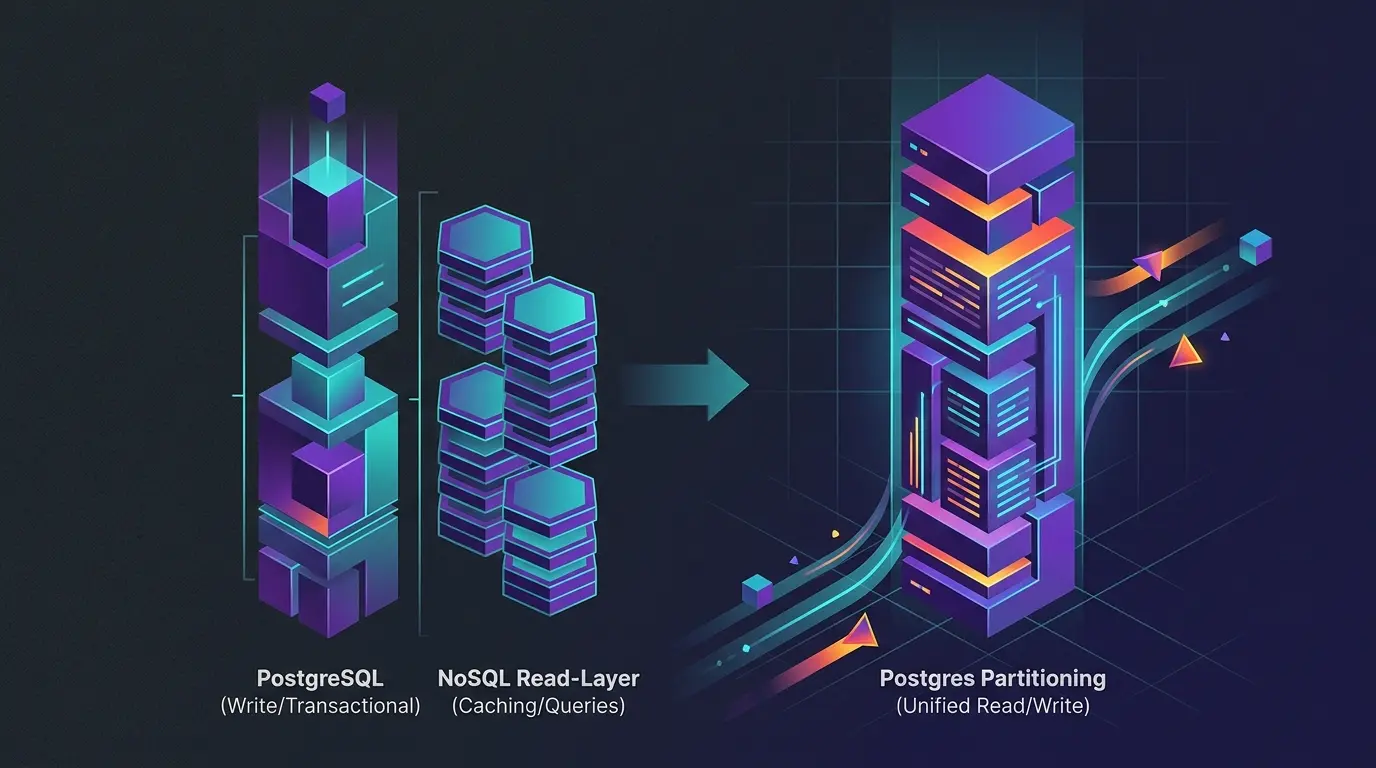

Some teams are already finding a middle ground by treating AI as a junior pair programmer rather than a replacement. It is great for generating unit tests or boilerplate, but you probably should not let it design your database schema or your security protocols.

Domain knowledge remains the final filter. An AI can suggest a thousand ways to solve a problem, but it does not understand your company’s specific business constraints or the weird legacy bug that has been haunting your production environment since 2018. The tool is only as good as the engineer steering it.

We are not seeing a total replacement of labor. Instead, we are seeing a shift in the nature of the labor required. The hard work of engineering has always been about problem-solving and system design, not just typing characters into an IDE. If AI eventually automates the easy 80 percent of coding, we have to ask ourselves a difficult question. Will we be left with a workforce capable of solving the complex 20 percent that remains? The answer will likely depend on whether we spend this era building better tools or just building better shortcuts.