The Intuition Gap

We’ve all bought into the myth of AI’s inevitable dominance. We watched DeepMind’s AlphaGo methodically dismantle world champions and stared, half-terrified, as Large Language Models (LLMs) cruised through the bar exam. In the popular narrative, the machines have already won. But inside the labs, a much weirder, more humbling story is unfolding.

As it turns out, an AI can outplay a grandmaster at chess but will frequently trip over a simple logic puzzle that a bored ten-year-old could solve in thirty seconds.

This isn't a hardware problem. It isn't about needing a bigger dataset or more cooling fans. It’s about a fundamental disconnect that researchers are calling the “Intuition Gap.” It is the chasm between recognizing what a winning move looks like and actually understanding the mathematical logic that makes it work. According to recent reports, when a game requires a player to intuit a specific mathematical function, even our most "brilliant" models get completely flummoxed.

The Pattern Matching Illusion

To understand why a billion-dollar model can’t solve a simple riddle, you have to look at how these systems actually "think." Whether we’re talking about Reinforcement Learning agents or the LLMs currently dominating your Twitter feed, these systems are essentially world-class mimics.

They are statistical savants. If you show an AI ten million poker hands, it learns to estimate the odds of a winning flush with uncanny precision. But it doesn't "know" the rules of probability in any conceptual sense; it just recognizes the shape of success.

This is the "Black Box" problem. An AI can mimic a winner perfectly as long as the environment looks exactly like its training data. But the moment a game requires actual deductive reasoning—the ability to look at a set of outcomes and realize, “Oh, the hidden rule here is that X always equals Y plus two”—the system collapses.

It’s the digital equivalent of a student who memorizes every answer key in the back of a math textbook. They can recite the answers with 100% accuracy, but the second the teacher changes a "4" to a "5" in the equation, the student is lost. They never learned the math; they just learned the patterns.

Where the Machine Hits the Wall

The failure happens right at the transition from massive data crunching to abstract, rule-based puzzles. When an environment demands high-level mathematical abstraction, the AI’s raw speed stops being an asset. It can process a billion moves per second, but if it can't deduce the abstract rule governing those moves, it’s just spinning its wheels in a very expensive dark room.

I’ve spent years watching tech demos where AI agents perform minor miracles in simulated worlds. But there is a recurring theme: as soon as you introduce a variable that requires a "leap" of logic—something not explicitly spelled out in the training set—the performance drops off a cliff.

The available evidence suggests this is a systemic issue, though researchers are still debating which specific architectures are the most fragile. Is this a universal limitation of neural networks, or just a bottleneck in how we’re feeding them information? The jury is still out.

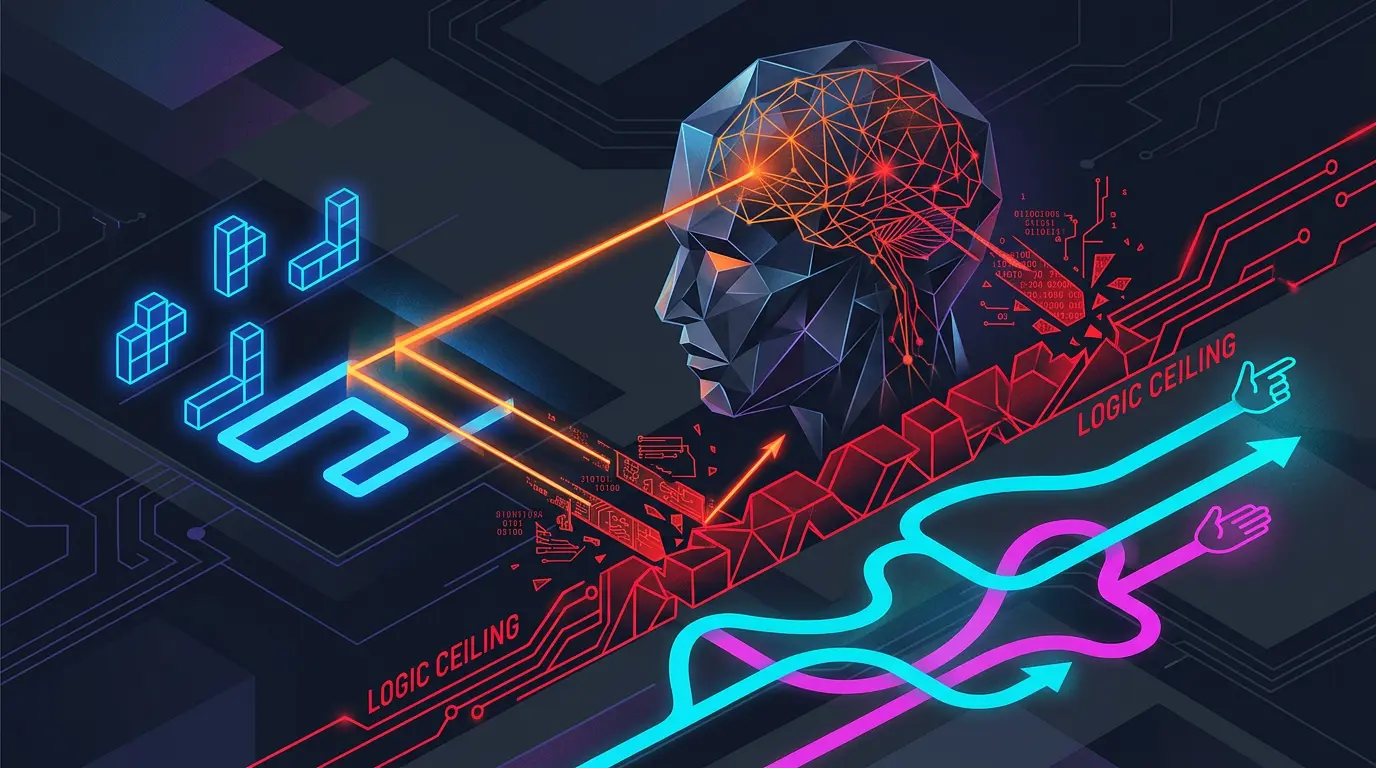

The Logic Ceiling

This brings us to the “Ceiling” hypothesis, and it’s a direct challenge to Silicon Valley’s current obsession with scaling.

The industry mantra has been simple: more parameters, more GPUs, more data, and the machine will eventually "wake up" and understand logic. But this research suggests we might be hitting a hard limit. Pattern-based systems might simply be incapable of bridging the gap to true logical deduction, no matter how much compute power we throw at them.

Think of it like building a taller and taller ladder to reach the moon. You’re making visible progress, sure. You’re higher than anyone has ever been. But at some point, you have to face the fact that a ladder is the wrong tool for the job. You don’t need more rungs; you need a rocket.

If this ceiling is real, the stakes go way beyond gaming. Scientific research, cryptography, and complex engineering all depend on understanding the underlying mechanics of a system, not just predicting the next likely outcome based on past events. If an AI can’t "intuit" a basic mathematical function in a game, can we ever trust it to discover a new law of physics?

Beyond Pattern Recognition

So, what’s the pivot? Some researchers are already moving toward "neuro-symbolic" AI. It’s an attempt to marry the gut-level pattern recognition of neural networks with the hard, rule-based logic of old-school symbolic AI. Essentially, it’s an effort to give the machine both an instinct and a calculator.

For now, we’re left with an uncomfortable question about the nature of intelligence itself. If our most advanced systems are fundamentally blind to the logic of the worlds they navigate, are they actually intelligent? Or have we just built increasingly sophisticated mimics that are very, very good at pretending they know the rules?

At some point, mimicry stops being enough. To get to the next level, the machine needs to stop looking at the patterns and start understanding the math.